From POC to Production: Why a Scalable Platform is Essential

Although many companies are exploring Artificial Intelligence (AI), many initiatives remain pilot projects (POCs) that never make the transition into production. The reason is rarely the technology itself, but rather a lack of architectural maturity, insufficient integration into existing processes, and the absence of a sound operating model.

Using AI in production takes more than a model with good metrics. It requires an architecture that brings together security, scalability, monitoring, data management, contextual understanding, and governance, and that is aligned with regulatory requirements, user groups, and IT environments. Only in this way can business value, efficiency, and compliance be ensured in equal measure.

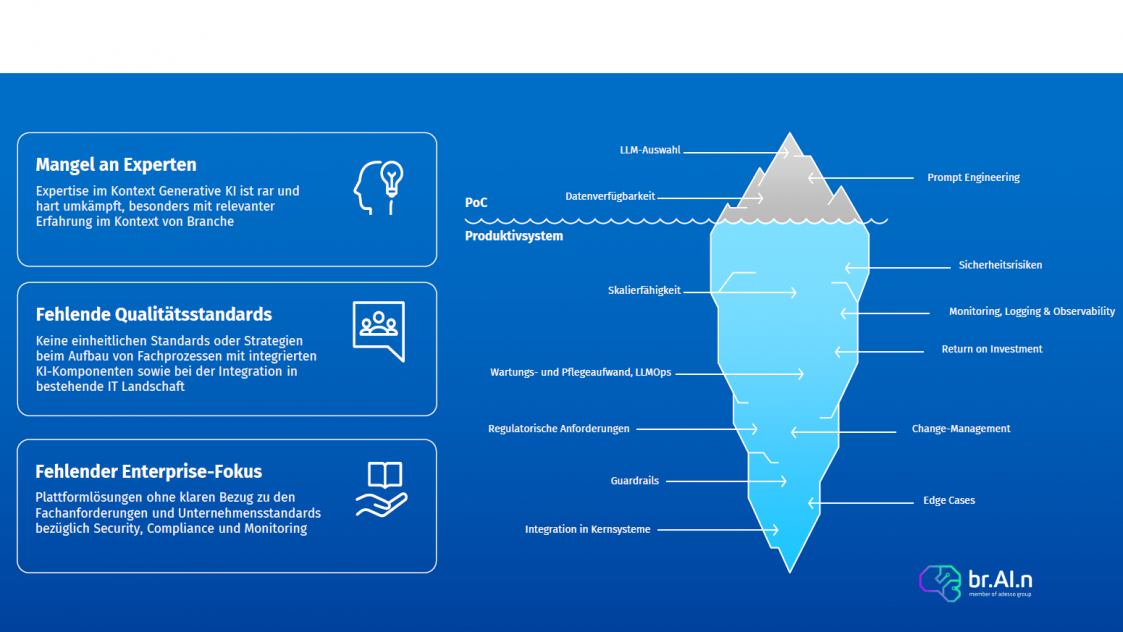

As shown in the figure above, for a Proof of Concept (PoC), usually only data availability, prompt engineering, and selection of the appropriate LLM are crucial. However, this is far from sufficient for a production deployment. These aspects merely form the tip of the iceberg.

A robust and production-ready solution must cover significantly more, especially:

Only when all these dimensions are taken into account can Generative AI be operated reliably, safely, and economically within the company.

This architecture can be realized in various ways. However, to cover the aspects mentioned above while keeping the time-to-production for standard use cases within weeks rather than months, a robust platform is indispensable.

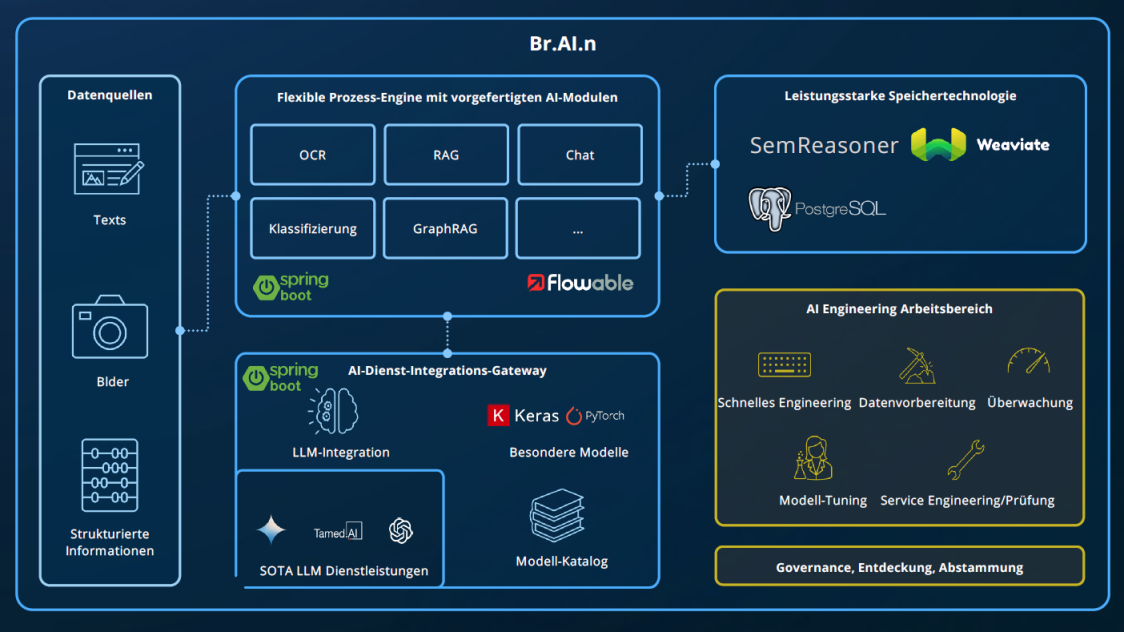

The use cases described below were implemented with the br.AI.n platform, a Java-based enterprise low-code platform that enables the creation of generative AI workflows from configurable standard building blocks in BPMN 2.0 notation.

The br.AI.n platform connects all the components necessary for a scalable, secure, and production-ready Generative AI implementation: from data ingestion, through modular AI services and powerful storage and retrieval technologies, to an engineering workspace for data preparation, tuning, and governance. All of this is orchestrated via standardized process models and is fully extensible.

evia is an integration and distribution partner of br.AI.n, because even with the best platform, the right expertise and experienced resources are needed to unlock its full potential.

In the previous blog post, we presented a use case for automated application data collection, which was realized together with the Investitionsbank Schleswig-Holstein (IB.SH) on the br.AI.n platform. This time, we look at two further application scenarios: first, checking contracts for conformity with the DORA regulation, and second, verifying supplier documents for compliance with the Code of Conduct of a major customer.

Automated Contract Review with DORA: Digital Operational Resilience in Practice

The EU's DORA Regulation (Digital Operational Resilience Act) has required all financial companies, including banks, insurance companies, and asset management companies, to maintain a comprehensive framework for digital resilience since January 2025. The goal is to ensure that critical business processes remain stable even in the event of cyber attacks, system failures, or disruptions at third-party providers.

A central component of DORA is contract and supplier management. For example, contracts with IT and cloud service providers must meet clear requirements, such as those relating to:

Since contracts are often very extensive and structured differently, manual review is extremely complex and error-prone. This is where the br.AI.n platform comes in: it automatically analyzes contracts, identifies the relevant clauses, and checks their compliance with DORA's regulatory requirements.

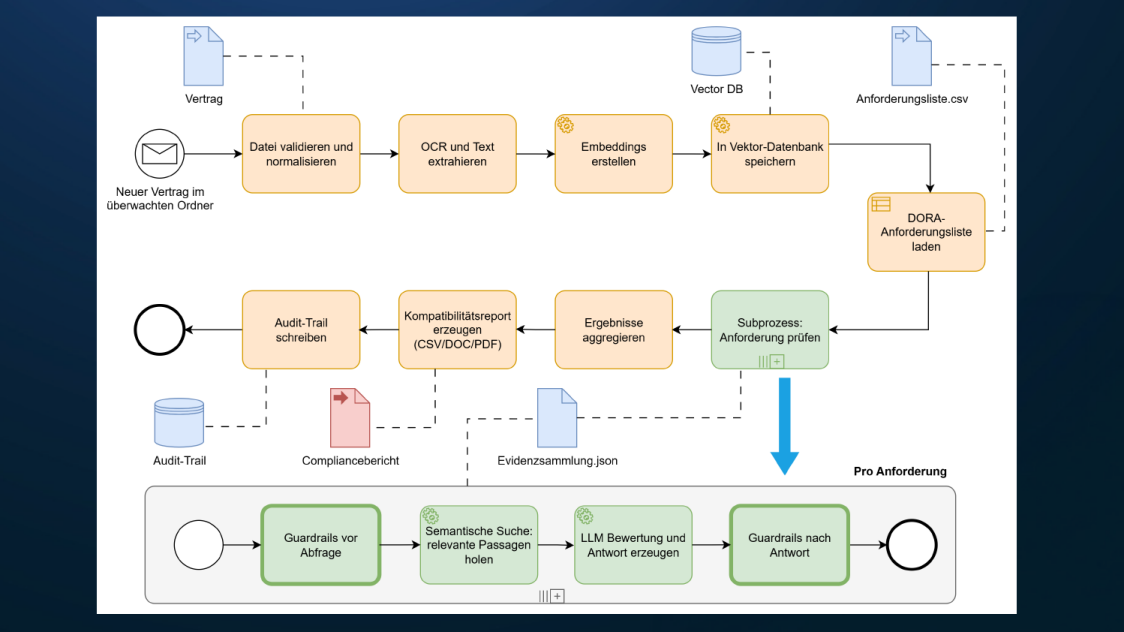

The figure shows a simplified, high-level view of the process modeled in BPMN for verifying DORA compliance. The production workflow includes additional checks, gateways, error handling, and escalations, but the simplified example conveys how the solution works. The modeled workflow translates directly into a runnable backend with REST APIs. This backend is deployed and connected to a custom-developed or existing UI.

Core logic in bullet points

What makes the implementation so straightforward

The BPMN definition runs on the br.AI.n platform with a BPMN engine. Each task is modeled as a service task or call activity and invokes the corresponding backend service, such as OCR, the embedding service, retrieval, LLM scoring, or reporting. The engine handles orchestration, error and retry logic, instance correlation, and the persistence of states and audit logs. The workflow can be started, monitored, and analyzed from a UI via REST APIs.
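To make the division of labor between tasks and engine more tangible, here is a minimal sketch in plain Java. It is not the br.AI.n API or a real BPMN engine: `WorkflowSketch`, `ServiceTask`, `AuditLog`, and the task names are hypothetical and only illustrate the idea that each step delegates to a service while a central runner takes care of ordering, retries, and an audit trail.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.UnaryOperator;

/** Hypothetical sketch: sequential task orchestration with retries and an audit log. */
class WorkflowSketch {

    /** One step of the process: a name plus the (assumed) service call it delegates to. */
    record ServiceTask(String name, UnaryOperator<String> call) {}

    /** Append-only record of what happened in one process instance. */
    static final class AuditLog {
        private final List<String> entries = new ArrayList<>();
        void add(String entry) { entries.add(entry); }
        List<String> entries() { return List.copyOf(entries); }
    }

    /** Runs the tasks in order; the output of one task is the input of the next. */
    static String run(List<ServiceTask> tasks, String input, int maxAttempts, AuditLog log) {
        String payload = input;
        for (ServiceTask task : tasks) {
            payload = runWithRetry(task, payload, maxAttempts, log);
        }
        return payload;
    }

    /** Tries a single task up to maxAttempts times and logs every attempt. */
    private static String runWithRetry(ServiceTask task, String payload,
                                       int maxAttempts, AuditLog log) {
        RuntimeException last = null;
        for (int attempt = 1; attempt <= maxAttempts; attempt++) {
            try {
                String result = task.call().apply(payload);
                log.add(task.name() + " ok (attempt " + attempt + ")");
                return result;
            } catch (RuntimeException e) {
                last = e;
                log.add(task.name() + " failed (attempt " + attempt + "): " + e.getMessage());
            }
        }
        throw new IllegalStateException("Task " + task.name() + " exhausted retries", last);
    }

    public static void main(String[] args) {
        int[] ocrCalls = {0}; // simulates an OCR service that times out once
        List<ServiceTask> tasks = List.of(
            new ServiceTask("ocr", doc -> {
                if (ocrCalls[0]++ == 0) throw new RuntimeException("timeout");
                return doc + " -> text";
            }),
            new ServiceTask("retrieval", text -> text + " -> clauses"),
            new ServiceTask("llm-scoring", clauses -> clauses + " -> findings"));

        AuditLog log = new AuditLog();
        System.out.println(run(tasks, "contract.pdf", 3, log));
        log.entries().forEach(System.out::println);
    }
}
```

In a real BPMN engine, the task list comes from the process model rather than from code, and state and audit entries are persisted instead of held in memory. The point of the sketch is only that retry and logging logic lives in one place and not inside each service.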

The diagram is not just documentation. It is the specification of the production process and therefore the basis for a traceable, scalable, and auditable system. The combination of BPMN orchestration, vector DB retrieval, LLM evaluation, and guardrails creates reproducible results with clear evidence. This is precisely what DORA demands, and it enables the transition from a POC to a robust enterprise-level solution.

Operation and Deployment

The solution can be deployed flexibly: in the public cloud, in German sovereign cloud environments, and fully on-premises in the data center. Especially in the DORA-relevant financial sector, data residency, control, and auditability are central. Regardless of the operating model, APIs and functionality remain identical, and Infrastructure as Code simplifies rollout and maintenance. Network isolation, key management, and integration with existing security and GRC processes are included.

Automated CoC inspection for suppliers

A very similar practical use case: ensuring that the codes of conduct of a large industrial company's suppliers align in content with the buyer's CoC. This is important for legal requirements, reputation protection, ESG (Environmental, Social, and Governance) guidelines, and procurement policies. Deviations can lead to audit findings, contractual risks, and supplier debarment.

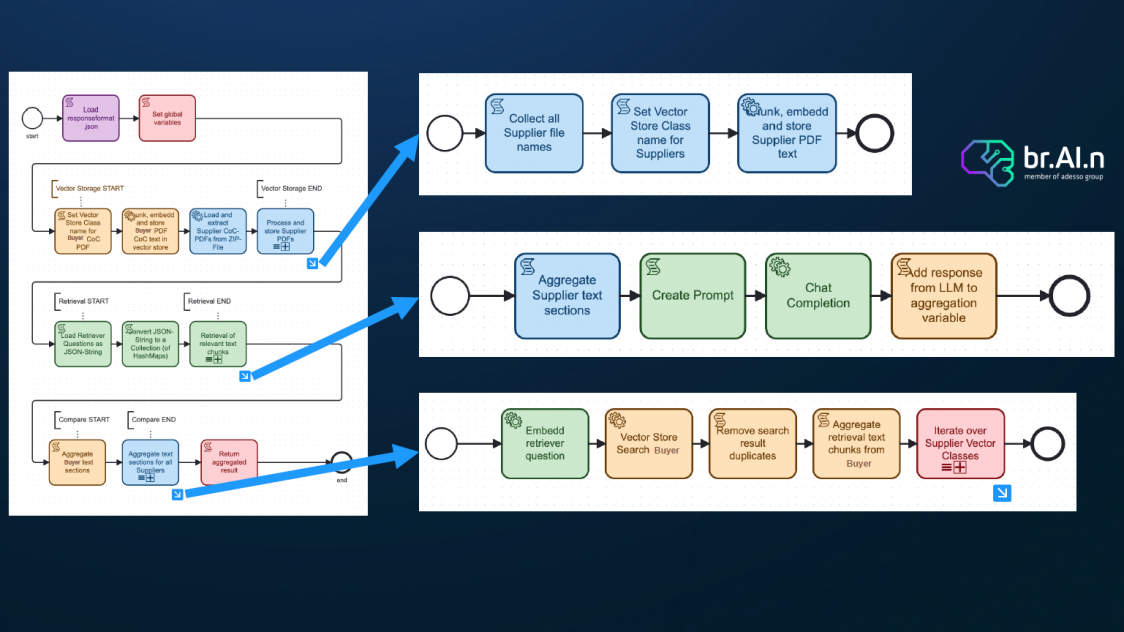

The essential difference from DORA: there is no formalized catalog of paragraphs here. Instead, the AI extracts the relevant guiding principles and control points from the buyer's CoC and compares them semantically with the suppliers' CoCs. This way it recognizes statements that have the same meaning but are worded differently, as well as gaps.
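The semantic comparison described above can be sketched roughly as follows. This is an illustration under assumptions, not the production logic: the embedding vectors are hand-made toy values instead of the output of a real embedding model, and `CocMatcherSketch`, the record names, and the threshold of 0.8 are hypothetical. For each buyer principle, the sketch looks for the most similar supplier passage by cosine similarity and flags a gap when nothing is similar enough.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical sketch: point-by-point CoC matching via cosine similarity. */
class CocMatcherSketch {

    /** A text passage together with its (here: toy) embedding vector. */
    record Passage(String text, double[] embedding) {}

    /** Best supplier evidence for one buyer principle, or a flagged gap. */
    record Match(String principle, String evidence, double score, boolean gap) {}

    /** Cosine similarity of two vectors; returns 0 if either vector is all zeros. */
    static double cosine(double[] a, double[] b) {
        if (a.length != b.length) throw new IllegalArgumentException("dimension mismatch");
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        if (normA == 0 || normB == 0) return 0.0;
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** For each buyer principle, finds the closest supplier passage and marks gaps. */
    static List<Match> compare(List<Passage> buyer, List<Passage> supplier, double threshold) {
        List<Match> result = new ArrayList<>();
        for (Passage principle : buyer) {
            Passage best = null;
            double bestScore = -1.0;
            for (Passage candidate : supplier) {
                double score = cosine(principle.embedding(), candidate.embedding());
                if (score > bestScore) {
                    bestScore = score;
                    best = candidate;
                }
            }
            boolean gap = best == null || bestScore < threshold;
            result.add(new Match(principle.text(), best == null ? "" : best.text(), bestScore, gap));
        }
        return result;
    }

    public static void main(String[] args) {
        // Toy 3-dimensional vectors; a real system would use an embedding model.
        List<Passage> buyer = List.of(
            new Passage("No child labor", new double[] {1, 0, 0}),
            new Passage("Anti-corruption", new double[] {0, 1, 0}));
        List<Passage> supplier = List.of(
            new Passage("We do not employ minors", new double[] {0.9, 0.1, 0}),
            new Passage("We recycle packaging", new double[] {0, 0, 1}));

        for (Match m : compare(buyer, supplier, 0.8)) {
            System.out.println(m.principle() + " -> "
                + (m.gap() ? "GAP" : "covered by: " + m.evidence()));
        }
    }
}
```

With these toy vectors, the differently worded statement about minors is matched to the child labor principle, while the anti-corruption principle has no sufficiently similar counterpart and is reported as a gap. In practice, the threshold would need to be calibrated on real documents.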

Robust approach based on embeddings

High-level BPMN diagram

Why not just put both CoCs into a strong LLM with a large context window?

Because an LLM-only approach quickly becomes expensive and hard to scale as the length and number of documents grow, results are difficult to reproduce, and evidence tracking suffers. With embeddings and a vector store, we retrieve only the truly relevant passages for each question. This reduces tokens and costs, lowers the risk of hallucinations, and anchors every statement to evidence with citations. It also enables consistent point-by-point matching even when the wording differs, allows indexes to be reused for new suppliers or updates, and fulfills audit and compliance requirements through stable, traceable pipelines. In short, retrieval-augmented generation delivers more precise, cheaper, reproducible, and auditable results, especially with many CoCs.
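The retrieval step itself is conceptually simple. The following minimal sketch assumes that the passages have already been scored against a question by a vector store. It keeps only the top-k passages and prefixes each with its identifier so that an answer can cite its evidence. `RetrievalSketch`, `Chunk`, and the sample identifiers are hypothetical names for illustration.

```java
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;

/** Hypothetical sketch: pick the top-k scored passages and build a citable context. */
class RetrievalSketch {

    /** A passage with a stable id (for citations) and a relevance score for one question. */
    record Chunk(String id, String text, double score) {}

    /** Returns the k highest-scoring chunks, best first. */
    static List<Chunk> topK(List<Chunk> scored, int k) {
        return scored.stream()
            .sorted(Comparator.comparingDouble(Chunk::score).reversed())
            .limit(k)
            .collect(Collectors.toList());
    }

    /** Joins the chunks into a prompt context, each line tagged with its id. */
    static String buildContext(List<Chunk> chunks) {
        return chunks.stream()
            .map(c -> "[" + c.id() + "] " + c.text())
            .collect(Collectors.joining("\n"));
    }

    public static void main(String[] args) {
        List<Chunk> scored = List.of(
            new Chunk("coc-s1-p4", "We do not employ minors.", 0.93),
            new Chunk("coc-s1-p9", "We recycle packaging.", 0.12),
            new Chunk("coc-s1-p5", "Working hours follow local law.", 0.41));
        // Only the two most relevant passages reach the LLM, not the whole document.
        System.out.println(buildContext(topK(scored, 2)));
    }
}
```

Because only the selected passages are sent to the model, the prompt size depends on k rather than on the document length, and the ids in the context give every answer a pointer back to its source.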

Regulatory certainty and reproducibility: EU AI Act and ISO 42001 with Validator

Especially in regulated industries, the pressure to operate AI in a traceable, secure, and auditable manner is growing. To ensure our solution meets the requirements of the EU AI Act and ISO 42001 while delivering consistent, accurate results, we are integrating Validator. The tool combines automated compliance, governance, and testing of AI solutions and models. The result: audit-proof evidence, reproducible quality, and stable results, regardless of LLM or infrastructure changes.